Get Started in Immersive Audio with These Mixing Tips and Spatial Audio Music Production Techniques

The following information on immersive audio is excerpted from the Berklee Online course Immersive Audio Post Production and Mixing Techniques, written by Dan Pfeiffer, and currently enrolling.

Even though humans have been experiencing “immersive” sound in the natural world since the dawn of time, there is still vast uncharted territory in the creative possibilities of music production for immersive formats. Paradoxically, it wasn’t until the invention of sound recording that we first lost immersion—mono reduced the world to a single-point source, becoming the baseline from which later developments worked to rebuild dimensionality.

In contemporary practice, four broad categories of immersive music production have emerged. The first centers on an authentic, often hyper-realistic reproduction of an acoustic environment, exemplified by Morten Lindberg’s recordings that place the listener inside a vivid, enveloping performance space in the midst of the performers.

The second involves reimagining material originally conceived for mono or stereo, which requires partially deconstructing a work built around a two-dimensional soundstage; the Beatles Atmos remixes demonstrate both the potential and the controversy of this approach.

The third category includes modern productions created in stereo but with an awareness that immersive expansion is possible—Jacob Collier, for instance, often writes with an implicit three-dimensional sensibility even in a stereo workflow.

The fourth and final category consists of music conceived explicitly for immersive playback, where the immersive version is the primary artistic statement and the stereo mix is a reduced translation.

In this article, we will focus particularly on the fourth category, while recognizing that the techniques and concepts presented here meaningfully enhance the immersive experience across all forms of immersive music production.

Staging and Pan Automation

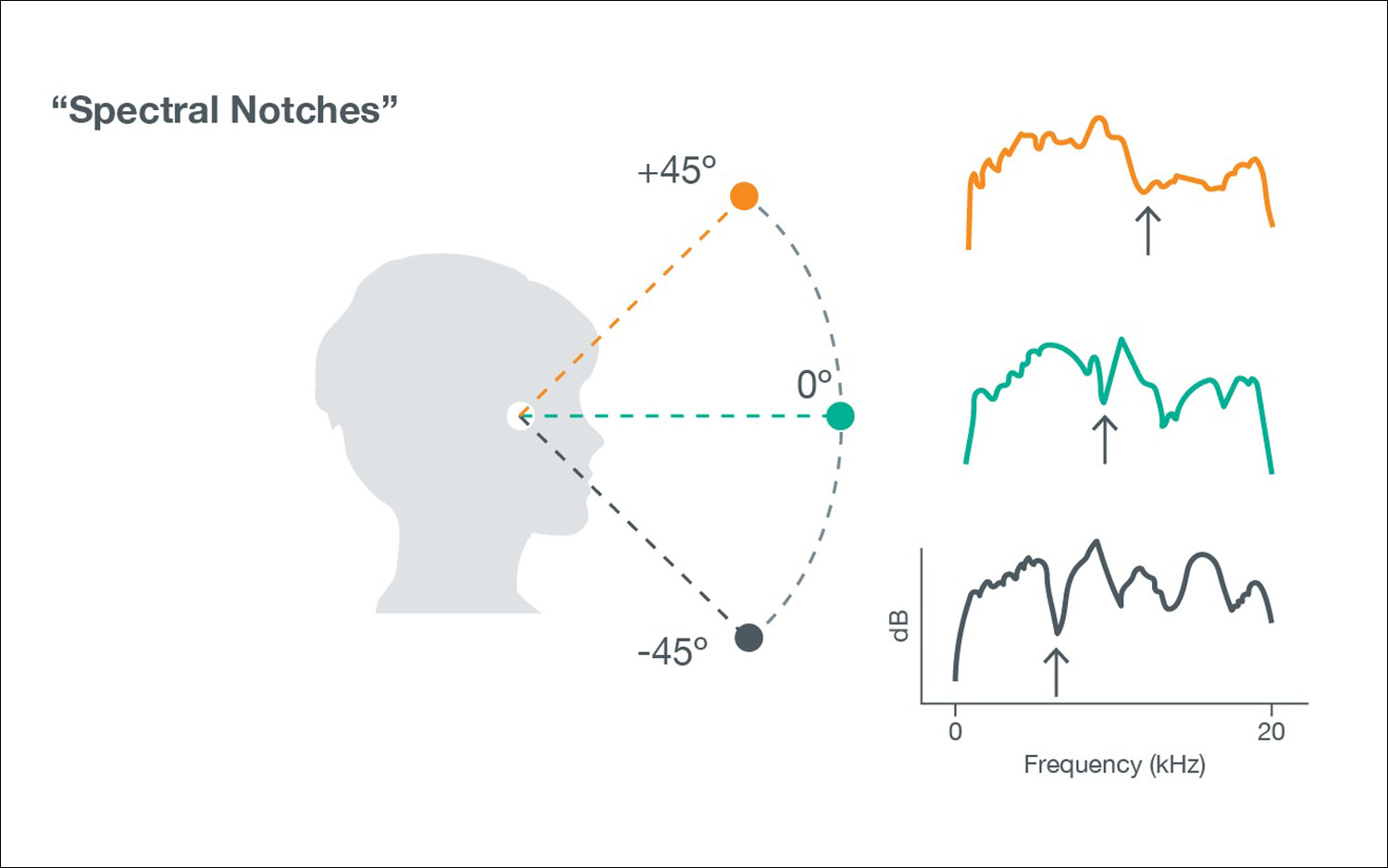

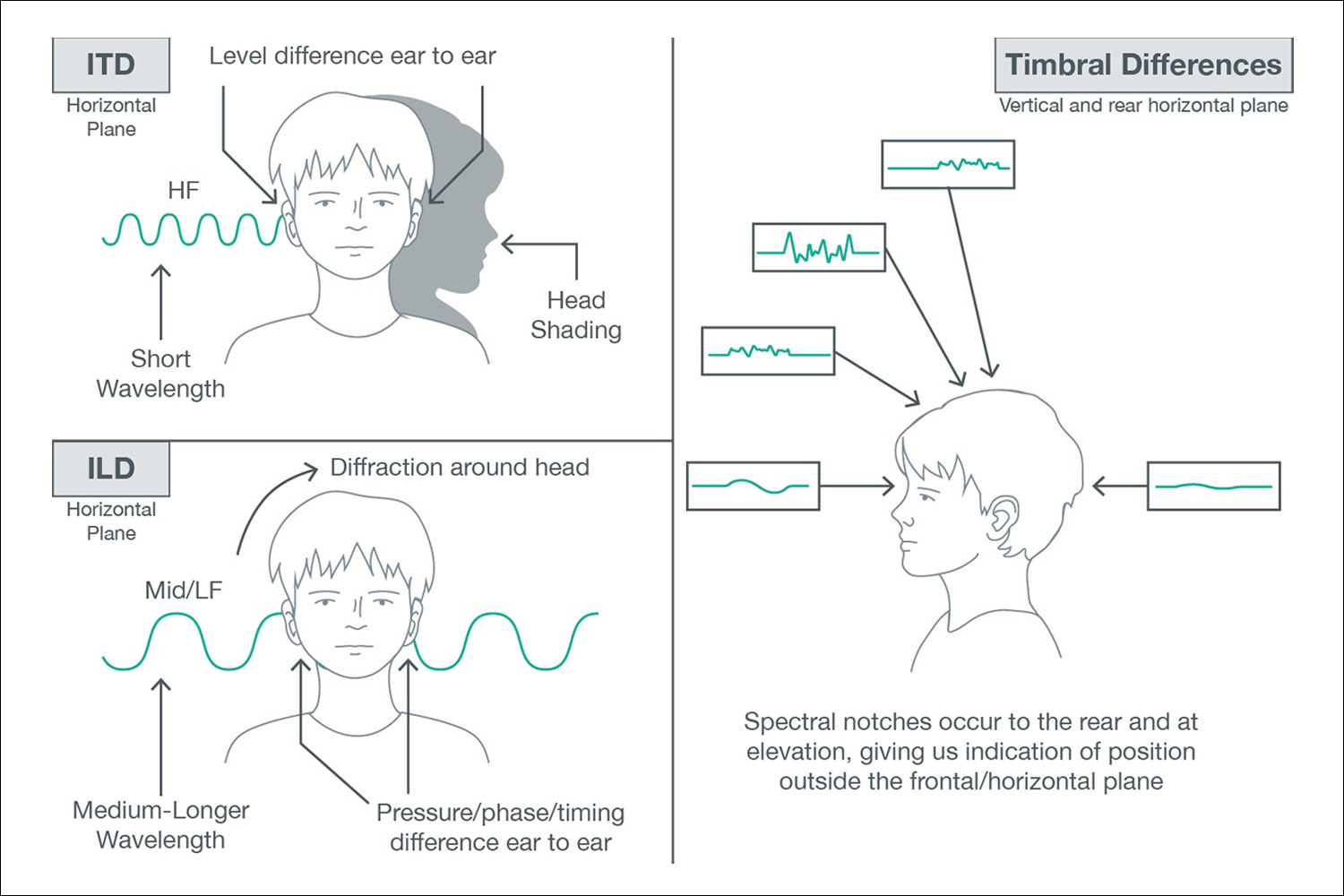

When we start exploring immersive audio, we’ll always return to the same foundation: human instinct and the way our senses constantly interpret and contextualize the world around us. It’s worth reviewing the essential patterns. Sounds in front of us command the greatest share of our auditory attention. Sounds arriving from behind are perceived as lower in level and softer in high-frequency content, but important sounds (such as vocals or solos) or sudden sounds from the rear can still command a strong response. Elevation cues come from the subtle filtering of our outer ears, known as spectral notching: an individualized set of timbral signatures that tell the brain whether something is above or below us.

And high-frequency-rich sounds anchor themselves most clearly in space because their timbral details make directional differences more obvious to our auditory system.

When we stage a recording in three dimensions, these instincts matter. If we want the listener engaged and enveloped, we tend to place the core musical elements—the ones we want people to focus on—toward the front. We extend the traditional left–right panorama outward into a wider horizontal field, wrapping the listener without sacrificing clarity. Supporting material that enhances a sense of space often lives to the sides and rear, while height channels and special effects provide vertical dimension or textural sparkle without pulling focus from the musical narrative. Percussion, transitional gestures, and atmospheric elements can be placed with more creativity, so long as they contribute to immersion rather than distraction.

But to truly deepen our understanding of staging, we need to talk about motion. Any movement in the auditory field captures attention in a distinct way. It’s not far removed from what we achieve in stereo with carefully shaped level automation—a subtle push that makes one voice momentarily stand out. Immersive formats simply allow us to reimagine that toolset. A sound that passes by us—front to back, back to front, or across the listening plane—automatically draws more cognitive bandwidth. Our brains are wired to track motion.

Macro Motion

In immersive mixing, there are two broad categories for 3D motion-based panning: macro and micro motion.

Macro motion is a large gesture that sweeps a sound over a large area. Typically, these gestures are for primary sonic components that are meant to captivate. An example would be a riser like the famous THX theme that could come from the rear height and pan forward and down to the front over its course. If we turn again toward Jacob Collier, you can hear this at the 51-second mark in the mix of “100,000 Voices.”

Macro motion usually captivates our attention and has the potential to add dramatic effect to an already dramatic musical moment.
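A macro gesture like the riser described above can be sketched as a path between two points in the room. The snippet below is only an illustration, not how any particular panner works: the function name `macro_sweep`, the normalized coordinates (x for left to right, y for rear to front, z for ear level to ceiling), and the smoothstep easing are all assumptions made for the example.

```python
def macro_sweep(progress, start=(0.0, -1.0, 1.0), end=(0.0, 1.0, 0.0)):
    """Return an (x, y, z) pan position along a sweep.

    progress: 0.0 at the start of the gesture, 1.0 at the end.
    start/end: hypothetical normalized room coordinates.
        x: -1 (left) to +1 (right)
        y: -1 (rear) to +1 (front)
        z:  0 (ear level) to 1 (ceiling)
    The defaults travel from rear height to front ear level.
    """
    p = min(max(progress, 0.0), 1.0)   # clamp to the gesture's duration
    eased = p * p * (3.0 - 2.0 * p)    # smoothstep: gentle start and landing
    return tuple(s + (e - s) * eased for s, e in zip(start, end))


# Sample the sweep at five points across the riser.
for step in range(5):
    print(macro_sweep(step / 4))
```

The easing keeps the gesture from starting or stopping abruptly. A linear ramp would also work, and the right choice depends on the musical moment.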

Micro Motion

By contrast, micro motion is a small, often barely perceptible amount of movement applied to an element in the mix to increase presence through auditory interest. Dolby Music offers a particularly powerful approach here, using subtle, often tempo-related pan gestures that don’t dramatically reposition a source but give it just enough spatial life to remain engaging. These movements operate almost below the level of conscious attention, yet the listener feels them. Rather than relying on fader rides that can increase masking or reduce dynamic contrast, micro motion lets the mix breathe. It adds vitality, preserves dynamic range, and creates a more fluid, engaging soundfield—one that feels alive without calling overt attention to its own mechanics. By tying the motion to the song’s tempo, it becomes woven into the rhythmic fabric of the production.
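To make the idea of tempo-related micro motion concrete, here is a minimal sketch that computes a small circular pan orbit locked to the song's tempo. It is not a Dolby tool or API. The function `circular_pan`, the normalized coordinates (x for left to right, y for rear to front), and the default radius and orbit length are assumptions chosen for illustration.

```python
import math


def circular_pan(time_s, bpm, beats_per_rev=4.0, radius=0.1, center=(0.0, 0.5)):
    """Return an (x, y) pan position for a tempo-synced circular orbit.

    time_s:        time in seconds from the start of the gesture
    bpm:           song tempo in beats per minute
    beats_per_rev: beats taken to complete one full circle (assumed: one 4/4 bar)
    radius:        orbit size in normalized room units (small = micro motion)
    center:        hypothetical resting position of the source
    """
    beats = time_s * bpm / 60.0
    angle = 2.0 * math.pi * (beats / beats_per_rev)
    x = center[0] + radius * math.cos(angle)
    y = center[1] + radius * math.sin(angle)
    return x, y


# One automation point per eighth note across a bar at 120 bpm.
bpm = 120
eighth = 60.0 / bpm / 2.0
for i in range(8):
    x, y = circular_pan(i * eighth, bpm)
    print(f"t={i * eighth:.2f}s  x={x:+.3f}  y={y:+.3f}")
```

Because the orbit length is expressed in beats rather than seconds, the motion stays aligned with the groove if the tempo changes. Keeping the radius small is what separates this from a macro gesture.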

Listen to these two excerpts: one is Static, and the other uses Tempo-Based Circular Motion. Try them on speakers and on headphones to see where the difference is easier to spot.

The latter example, with motion, is best experienced in a room with speakers. On headphones and other consumer playback systems like sound bars, the motion translates to varying degrees, but the effect remains: the shaker’s presence in the mix is very subtly increased, based not on a level increase but on our perception that it is moving.

Ultimately, motion in immersive mixing is not about spectacle for its own sake, but about working with the way humans naturally listen. By understanding how attention responds to position, movement, and timbre, we can use panning automation—especially micro motion—to bring elements to life, guide focus, and maintain clarity without disrupting musical balance. When applied thoughtfully, spatial motion becomes an expressive extension of the mix itself, reinforcing the musical narrative while remaining felt more than heard.

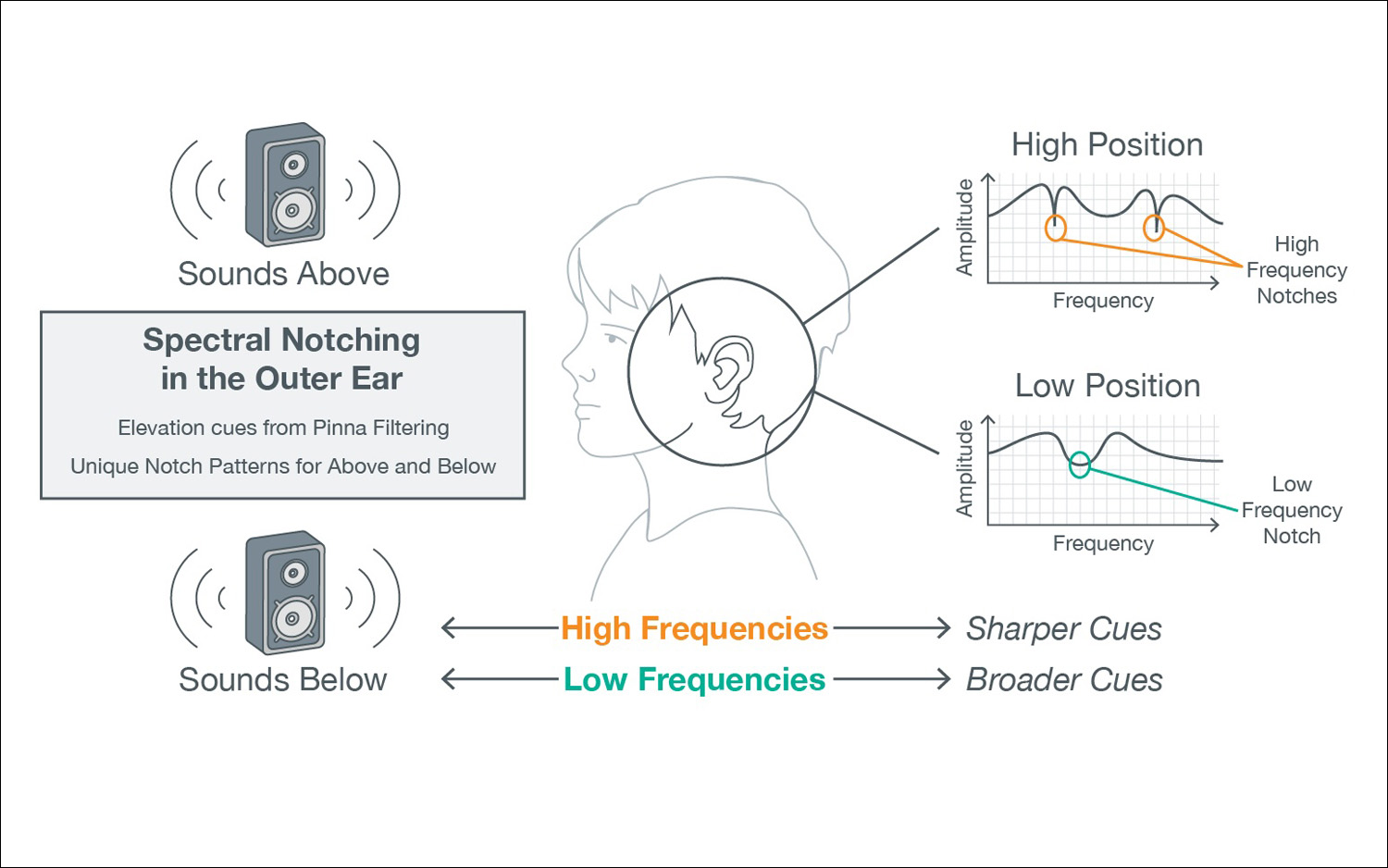

Height and Percussion

Sounds that originate from elevated positions tend to contain proportionally more high-frequency energy by the time they reach the listener. This is largely due to the way our pinnae (outer ears) filter incoming sound. When sound arrives from above, it interacts with the ridges and cavities of the pinnae in a way that emphasizes certain high-frequency bands while attenuating others. These frequency-dependent changes—rather than timing or level differences—are what allow us to infer elevation.

High frequencies are also easier to localize because of their shorter wavelengths. While interaural time differences (ITDs) and interaural level differences (ILDs) are extremely effective for left–right localization, they become far less useful in the vertical plane due to the vertical symmetry of the ears. As a result, vertical localization relies almost entirely on spectral cues—specifically, subtle notches and peaks in the frequency response caused by the interaction of sound with the outer ear.
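The weakness of timing cues in the vertical plane can be illustrated with a simplified spherical-head model. The sketch below uses the classic Woodworth approximation for ITD. The head radius, the speed of sound, and the function name `itd_seconds` are assumptions for illustration, and a real head is neither spherical nor perfectly symmetrical.

```python
import math

HEAD_RADIUS_M = 0.0875    # assumed average head radius in meters
SPEED_OF_SOUND = 343.0    # meters per second, air at roughly 20 degrees C


def itd_seconds(azimuth_deg, elevation_deg=0.0):
    """Approximate interaural time difference for a distant source.

    azimuth_deg:   0 = straight ahead, 90 = directly to one side
    elevation_deg: 0 = ear level, 90 = directly overhead
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    # Lateral angle: how far the source sits off the median plane.
    lateral = math.asin(math.sin(az) * math.cos(el))
    return HEAD_RADIUS_M / SPEED_OF_SOUND * (lateral + math.sin(lateral))


# Moving a source sideways changes the ITD a great deal.
for az in (0, 30, 60, 90):
    print(f"azimuth {az:>2} deg: {itd_seconds(az) * 1e6:6.1f} microseconds")

# Raising a centered source leaves the ITD at zero in this model.
for el in (0, 30, 60):
    print(f"elevation {el:>2} deg: {itd_seconds(0, el) * 1e6:6.1f} microseconds")
```

In this model a source directly ahead produces no time difference at any elevation, which is why the brain has to fall back on spectral cues to judge height.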

In the natural world, many sounds we associate with “above” are transient and rich in high-frequency content: birds, insects, rustling leaves, rain, wind in trees, or reflections from ceilings and canopies. In musical contexts, elevated sounds often include overhead percussion, cymbals, shakers, chimes, hand percussion, or the upper harmonics of instruments reflecting off architectural surfaces. These associations prime the listener to interpret high-frequency, percussive material as elevated, even in reproduced sound fields.

Try a simple experiment: Wherever you are, snap or clap directly in front of you, then repeat the gesture above your head. Even without visual input, the elevated version will feel different—brighter, less grounded, and less precisely localized. This illustrates an important limitation of human hearing: we are not naturally very good at determining exact vertical position. Unlike owls (whose ear openings are vertically offset to create up–down timing and level differences), humans must rely almost entirely on timbral change to perceive elevation.

In immersive production, this limitation becomes an opportunity. Because the brain is relatively tolerant of ambiguity in the vertical plane, a substantial amount of sound can be placed in the height layer without calling attention to itself. If elevated material is well-blended with the front and surround layers, the listener may not consciously notice “sound from above,” yet will still perceive a larger, more three-dimensional space. Even partial elevation—such as 30–50 percent height panning—can contribute significant vertical energy while preserving mix cohesion.
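As a rough way to reason about partial elevation, the sketch below splits a source between the ear-level and height layers using a constant-power law. This is an assumption made for illustration: actual renderers apply their own panning laws, and `height_split` is a hypothetical helper, not part of any product.

```python
import math


def height_split(height_amount):
    """Split a source between the ear-level and height layers.

    height_amount: 0.0 = fully at ear level, 1.0 = fully overhead.
    Returns (ear_level_gain, height_gain) as linear gains whose
    squares sum to 1, so total power stays constant (assumed law).
    """
    h = min(max(height_amount, 0.0), 1.0)
    angle = h * math.pi / 2.0
    return math.cos(angle), math.sin(angle)


def to_db(gain):
    """Convert a linear gain to decibels."""
    return 20.0 * math.log10(gain) if gain > 0.0 else float("-inf")


for pct in (30, 40, 50):
    ear, top = height_split(pct / 100.0)
    print(f"{pct}% height: ear-level {to_db(ear):.1f} dB, height {to_db(top):.1f} dB")
```

Under this law, a source at 50 percent sits about 3 dB below full level in each layer, so the height layer carries real energy while the ear-level image stays intact.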

A second, more explicit strategy is to intentionally place sources with strong high-frequency content and clear transient information into the height layer. Percussive and rhythmic sounds are especially effective because their fast attack times and spectral brightness make them easier to localize and more perceptually salient. When these elements appear overhead, the listener is far more likely to register the existence of a vertical dimension in the mix.

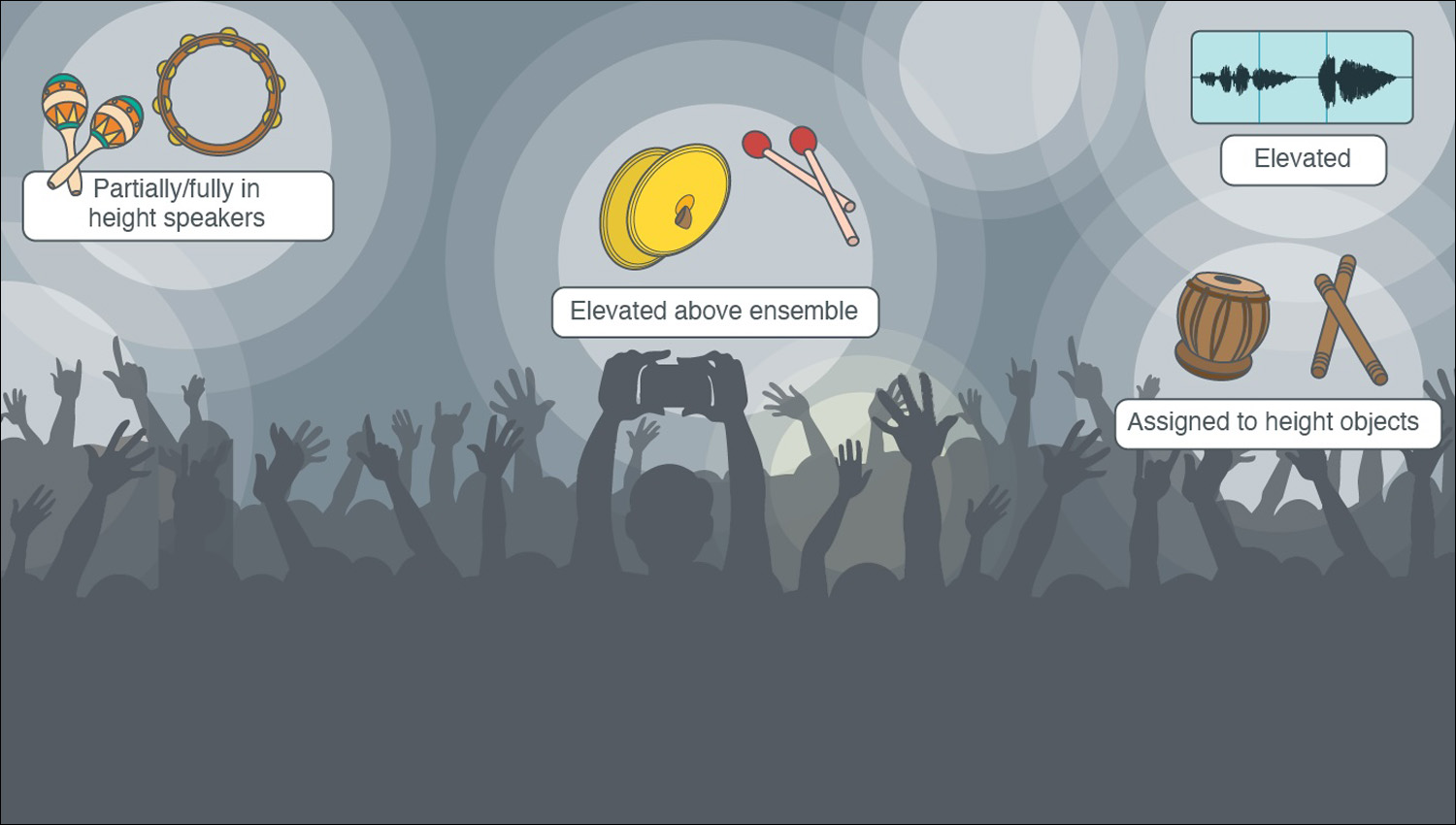

Common and effective examples include:

- Shakers, tambourines, or cabasas placed partially or fully in the height speakers

- Cymbal swells, mallet rolls, or auxiliary percussion elevated above the ensemble

- Short percussive effects (claves, wood blocks, tabla strokes) assigned to height objects

- High-frequency rhythmic synths or arpeggiated textures elevated to reinforce groove and motion

In practice, these elements do not need to be loud to be effective. Small amounts of elevated, high-frequency, transient-rich material can dramatically enhance the sense of envelopment and spatial realism. When used thoughtfully, height panning becomes less about novelty and more about reinforcing the physical plausibility and dimensionality of the sound field.

Background Vocals

In immersive mixing formats, background vocals serve not only a musical and emotional function, but also a critical spatial and perceptual role in production. Human hearing is optimized to prioritize vocal intelligibility in the frontal plane—particularly in the 2–5 kHz range—while sensitivity to those same frequencies is reduced in the rear and vertical axes. For this reason, lead vocals are typically anchored to the front center to preserve narrative focus and clarity. Background vocals, however, present an ideal opportunity to exploit the expanded spatial field available in immersive audio.

One of the primary advantages of working in immersive formats at the production stage is the ability to design vocal arrangements spatially, rather than stacking multiple instances of the human voice into the same stereo or channel-based location. When several vocal layers occupy the same perceptual space, they compete for overlapping frequency content—especially in the midrange where vocal energy is densest—resulting in masking, reduced clarity, and diminished harmonic definition. Even with careful EQ, tightly stacked background vocals can blur together perceptually.

By distributing background vocal layers across the horizontal, surround, and height dimensions, producers can significantly reduce masking before any detailed mixing decisions are made. Spatial separation allows the listener’s auditory system to distinguish individual voices more easily, preserving the identity of harmonic parts. This separation increases the perceived clarity of the harmonic texture while also allowing for greater overall intensity, since more vocal energy can be introduced without overcrowding the same perceptual space.

Immersive formats also enable producers to scale vocal energy as part of the arrangement itself. Choruses can expand outward and upward as additional background vocal layers are introduced into the surround and height speakers, creating a sense of physical growth and emotional lift. Verses, by contrast, may collapse back toward the front center, restoring intimacy and focus. In this way, spatial expansion and contraction become arrangement tools as powerful as orchestration, dynamics, or register.

From a psychoacoustic perspective, spatially distributing vocal harmonies enhances harmonic clarity through separation. Instead of relying solely on pitch, voicing, and timbre to differentiate chord tones, the listener is given spatial cues that help organize the harmonic structure. This allows dense vocal writing—such as clusters, suspensions, or gospel and jazz-influenced harmony stacks—to remain intelligible and emotionally impactful in ways that are difficult to achieve in traditional stereo production.

In immersive production, background vocals are therefore not merely decorative layers added at the mixing stage, but structural elements of the arrangement itself. When conceived and placed intentionally, they reduce masking, increase clarity, heighten emotional intensity, and fully leverage the three-dimensional canvas that immersive mixing provides.

Let’s explore how background vocals can be arranged spatially across different sections of a song. Consider how space functions as an arrangement tool—not just a mixing decision.

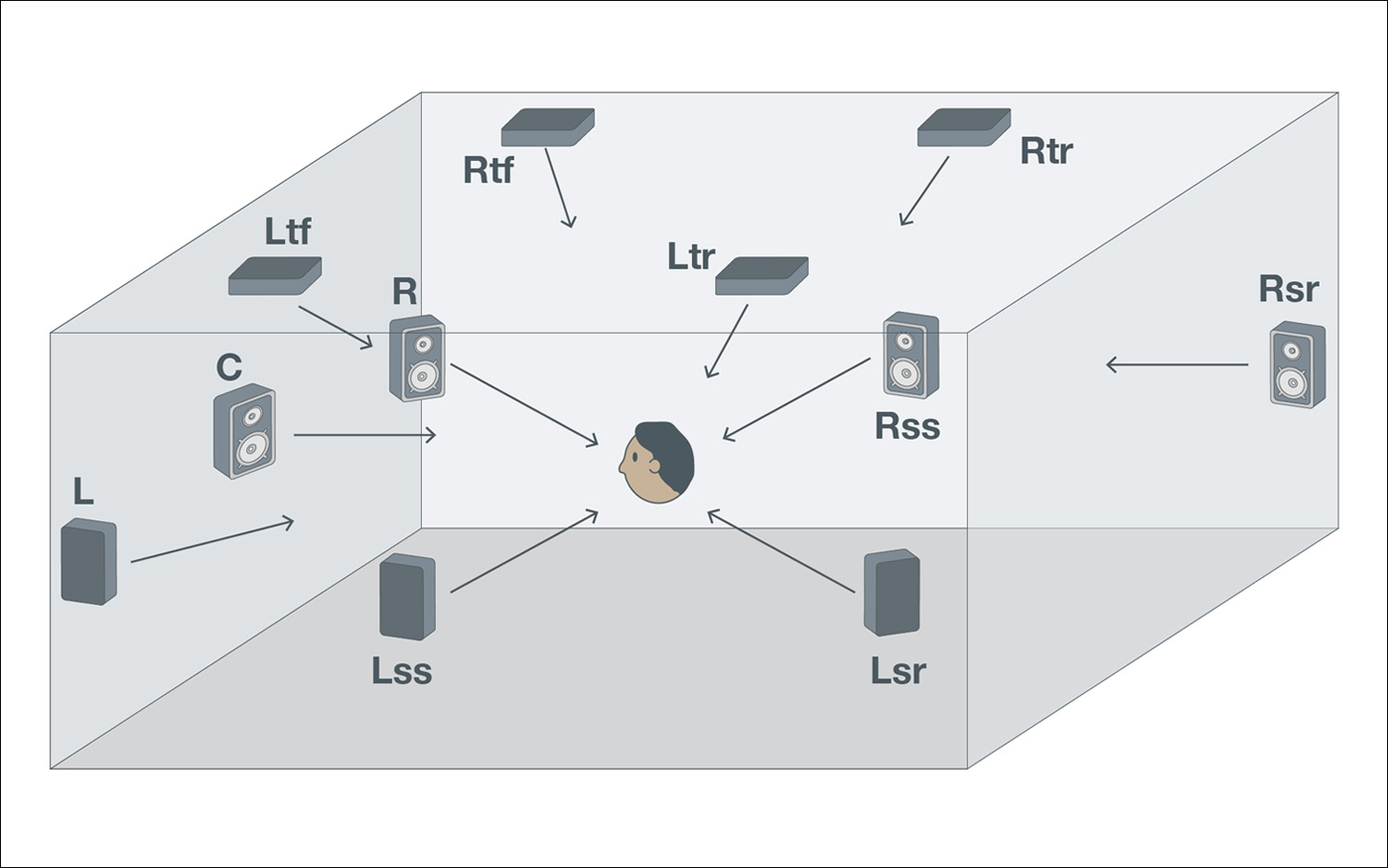

The above graphic represents a baseline 7.0.4 speaker setup for Atmos. Ideally, focus on room positioning (the 3D position of sounds in that space) around the listener rather than on any one particular speaker format.

Here is what each acronym stands for:

L: Left

R: Right

C: Center

Ltf: Left Top Front

Rtf: Right Top Front

Ltr: Left Top Rear

Rtr: Right Top Rear

Lss: Left Side Surround

Rss: Right Side Surround

Lsr: Left Surround Rear

Rsr: Right Surround Rear

Front Center (Lead Vocal Position)

- Lead Vocal — Front Center

- The lead vocal is typically anchored at the front center of the sound field. Human hearing is most sensitive to speech intelligibility in this position, especially in the 2–5 kHz range.

- Keeping the lead vocal stable and frontal preserves narrative focus and ensures lyrical clarity, even as other elements expand around it.

Front Left and Right (Verse Background Vocals)

- Verse: Intimate Background Vocals

- During verses, background vocals often sit close to the lead—slightly widened in the front left and right channels.

- This placement supports the harmony while maintaining intimacy and focus, without drawing attention away from the lead vocal or overcrowding the mix.

Surround Left and Right (Chorus Expansion)

- Chorus: Horizontal Expansion

- In the chorus, additional background vocal layers can extend into the surround speakers.

- This outward movement increases perceived width and emotional scale, allowing the chorus to feel bigger and more enveloping—without relying solely on increased level or density.

Height Speakers (Vertical Lift)

- Height Layer: Vertical Energy

- Some background vocal layers may be placed partially or fully in the height speakers, especially those with brighter timbre or less lyrical importance.

- Elevating these voices adds a sense of vertical dimension and lift, reinforcing the chorus as a physical and emotional expansion of space.

Full Room (Arrangement Over Time)

- Space as an Arrangement Tool

- As a song moves from verse to chorus, spatial expansion and contraction can function like orchestration or dynamics.

- Rather than stacking more vocals in the same location, immersive formats allow vocal energy to grow by spreading outward and upward—enhancing clarity, impact, and emotional contrast.
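The section-by-section placement above can be written down as a simple plan, which makes it easy to see exactly which speakers a chorus opens up. The structure below is a hypothetical sketch: the plan contents, the choice of the top front pair for the height layer, and the helper `speaker_changes` are illustrative assumptions, not a prescribed layout.

```python
# Hypothetical placement plan using the 7.0.4 speaker labels listed earlier.
VOCAL_PLAN = {
    "verse": {
        "lead": ["C"],                  # anchored front center
        "background": ["L", "R"],       # slightly widened, close to the lead
    },
    "chorus": {
        "lead": ["C"],
        # Front pair, plus surround expansion, plus assumed height lift.
        "background": ["L", "R", "Lss", "Rss", "Ltf", "Rtf"],
    },
}


def speaker_changes(plan, from_section, to_section, part="background"):
    """Return (added, removed) speakers when moving between two sections."""
    before = plan[from_section][part]
    after = plan[to_section][part]
    added = [spk for spk in after if spk not in before]
    removed = [spk for spk in before if spk not in after]
    return added, removed


added, removed = speaker_changes(VOCAL_PLAN, "verse", "chorus")
print("Chorus opens up:", added)

added, removed = speaker_changes(VOCAL_PLAN, "chorus", "verse")
print("Verse collapses away from:", removed)
```

Writing the plan out this way also makes it obvious that the lead vocal never moves, while the background vocals do all of the expanding and contracting.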

Immersive audio asks producers to think about space the same way they think about melody, harmony, or rhythm. Instead of treating spatial placement as a final mixing decision, the most effective immersive productions consider it from the beginning.

Throughout this article we explored a few ways that happens in practice. Motion can guide attention without changing level. Height channels and percussion can add vertical energy and realism. Background vocals can spread across the room to increase clarity and emotional scale.

Taken together, these choices shape how the listener experiences a piece of music. A mix can expand outward during a chorus, collapse inward for a verse, or gently move elements through the soundfield to keep the ear engaged.

When spatial decisions become part of the creative process, immersive formats open up a wider canvas for a song. The music feels larger, more dimensional, and more physically present.